| Source: COS website |

We recently came across a clear, easily communicated road map for implementing cultural change.* We’ll provide some background information on the author’s motivation for developing the road map, a summary of it, and our perspective on it.

The author, Brian Nosek, is executive director of the Center for Open Science (COS). The mission of COS is to increase the openness, integrity, and reproducibility of scientific research. Specifically, they propose that researchers publish the initial description of their studies so that original plans can be compared with actual results. In addition, researchers should “share the materials, protocols, and data that they produced in the research so that others could confirm, challenge, extend, or reuse the work.” Overall, the COS proposes a major change from how much research is presently conducted.

Currently, a lot of research is done in private, i.e., more or less in secret, usually with the objective of getting results published, preferably in a prestigious journal. Frequent publishing is fundamental to getting and keeping a job, being promoted, and obtaining future funding for more research; in other words, to having a successful career. Researchers know that publishers generally prefer findings that are novel, positive (e.g., a treatment is effective), and tidy (the evidence fits together).

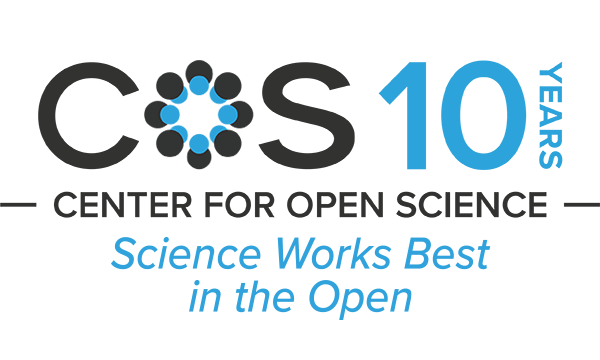

Getting from the present to the future requires a significant change in the culture of scientific research. Nosek describes the steps to implement such change using a pyramid, shown below, as his visual model. Similar to Abraham Maslow’s Hierarchy of Needs, a higher level of the pyramid can only be achieved if the lower levels are adequately satisfied.

| Source: "Strategy for Culture Change" |

Each level represents a different step for changing a culture:

• Infrastructure refers to an open source database where researchers can register their projects, share their data, and show their work.

• The User Interface of the infrastructure must be easy to use and compatible with researchers' existing workflows.

• New research Communities will be built around new norms (e.g., openness and sharing) and behavior, supported and publicized by the infrastructure.

• Incentives refer to redesigned reward and recognition systems (e.g., research funding and prizes, and institutional hiring and promotion schemes) that motivate desired behaviors.

• Public and private Policy changes codify and normalize the new system, i.e., specify the new requirements for conducting research.

Our Perspective

As long-time consultants to senior managers, we applaud Nosek’s change model. It is straightforward and adequately complete, and can be easily visualized. We used to spend a lot of time distilling complicated situations into simple graphics that communicated strategically important points.

We also totally support his call to change the reward system to motivate the new, desirable behaviors. We have been promoting this viewpoint for years with respect to safety culture: If an organization or other entity values safety and wants safe activities and outcomes, then it should compensate the senior leadership accordingly, i.e., pay for safety performance, and stop promoting the nonsense that safety is intrinsic to the entity’s functioning and leaders should provide it basically for free.

All that said, implementing major cultural change is not as simple as Nosek makes it sound.

First off, the status quo can have enormous sticking power. Nosek acknowledges it is defined by strong norms, incentives, and policies. Participants know the rules and how the system works; in particular, they know what they must do to obtain the rewards and recognition. Open research is anathema to many researchers and their sponsors; this is especially true when a project is aimed at creating some kind of competitive advantage for the researcher or the institution. Secrecy is also valued when researchers may (or do) come up with the “wrong answer,” i.e., findings that show a product is not effective or has dangerous side effects, or that an entire industry’s functioning is hazardous for society.

Second, the research industry exists in a larger environment of social, political, and legal factors. Many elected officials, corporate and non-profit bosses, and other thought leaders may say they want and value a world of open research but, in private and in their actions, believe they are better served (and supported) by the existing regime. The legal system in particular is set up to reinforce the current way of doing business, e.g., through patents.

Finally, systemic change means fiddling with the system dynamics, the physical and information flows, inter-component interfaces, and feedback loops that create system outcomes. To the extent such outcomes are emergent properties, they are created by the functioning of the system itself and cannot be predicted by examining or adjusting separate system components. Large-scale system change can be a minefield of unexpected or unintended consequences.

Bottom line: A clear model for change is essential but system redesigners need to tread carefully.

* B. Nosek, “Strategy for Culture Change,” blog post (June 11th, 2019).